As an Amazon Associate, SpecPicks earns from qualifying purchases. See our review methodology.

Framework Desktop & Ryzen AI Max+ 395 Review: Best Strix Halo Mini PCs in 2026

By SpecPicks Editorial · Published April 24, 2026 · Last verified April 24, 2026 · 11 min read

The Framework Desktop redefined what a small-form-factor AI workstation could be when it was announced in early 2025: a 4.5-liter desktop built around AMD's Ryzen AI Max+ 395 ("Strix Halo") APU with up to 128 GB of LPDDR5X unified memory, a 40-CU Radeon 8060S RDNA 3.5 iGPU, and 126 combined TOPS of on-chip AI acceleration. A year later, Strix Halo is no longer a single-vendor curiosity: MINISFORUM, Beelink, NIMO, HP, Corsair, and even GPD ship mini PCs and handhelds on the same silicon, and the platform has quietly become the best sub-$3,500 way to run 70B-class LLMs locally without stacking consumer GPUs.

This review does two jobs. First, it explains what the Ryzen AI Max+ 395 actually is, what the unified-memory architecture buys you over a discrete GPU, and where it stumbles versus an RTX 4090 or an M4 Max. Second, it's a buyer's guide to the five best Strix Halo mini PCs you can buy on Amazon today. Because the Framework Desktop itself sells only direct from Framework, most readers arriving at this article want the best available Strix Halo box, not just the reference design. If you need to hold Llama 3.1 70B at Q4 (about 42 GB of weights) entirely on-device, a single 32 GB RTX 5090 can't do it, and two 5090s cost more than a complete Strix Halo system; one Ryzen AI Max+ 395 mini PC with 128 GB of unified memory can, at a fraction of the power. That's the thesis, and the Beelink GTR9 Pro is how most buyers will actually realize it.

At a glance: the 5 best Strix Halo mini PCs

| Pick | Best For | Key Spec | Price Range | Verdict |
|---|---|---|---|---|
| Beelink GTR9 Pro | 🏆 Best overall | 128 GB LPDDR5X · 2 TB Crucial SSD · Dual 10GbE | ~$3,299 | The buyable Framework Desktop analog |
| MINISFORUM MS-S1 MAX | ⚡ Best performance | PCIe x16 slot · USB4 v2 (80 Gbps) · 320W PSU | ~$3,039 | Only Strix Halo box with a real PCIe slot |
| HP Z2 Mini G1a | 🎯 Best for pros | Ryzen AI Max+ PRO 395 · ISV-certified · Win 11 Pro | $3,500–$4,500 | Workstation-grade support & validation |
| NIMO Mini PC 128 GB | 💰 Best value | 128 GB · 2 TB SSD · LPDDR5X 8000 MT/s | ~$2,799 | Cheapest 128 GB Strix Halo on Amazon |
| Corsair AI Workstation 300 | 🧪 Budget pick | Ryzen AI Max 385 · 64 GB · Radeon 8050S | ~$1,699 | The entry into the Strix Halo family |

🏆 Best Overall: Beelink GTR9 Pro

• AMD Ryzen AI Max+ 395 (16C / 32T, 126 TOPS) • 128 GB LPDDR5X unified • 2 TB Crucial NVMe • Dual 10GbE + Wi-Fi 7 • ~$3,299

Pros

- ✅ 128 GB of unified LPDDR5X addressable by the Radeon 8060S iGPU — enough to hold Llama 3.1 70B Q4_K_M (~42 GB weights) entirely in "VRAM" with 80 GB of headroom for KV cache or a second model.

- ✅ Dual 10 GbE on a mini PC is unheard of; makes it a drop-in inference node for homelab or small-team deployments.

- ✅ Ships pre-tuned for DeepSeek 70B per Beelink's product listing. This is more than a marketing claim: the 128 GB pool is what actually makes a 70B model practical.

- ✅ Quieter than any RTX 4090/5090 workstation; sustained draw ~120 W under LLM load versus 450–575 W for a discrete GPU rig.

Cons

- ❌ No PCIe expansion — you cannot add a discrete GPU later. The iGPU is the accelerator.

- ❌ Prefill (prompt ingestion) speed is noticeably slower than a 5090; for long-context RAG workloads this matters.

- ❌ 2 TB fills up if you keep a large library of 70B-class GGUFs on-device (each is ~42 GB at Q4, more at higher-precision quants); budget for external NVMe.

For most SpecPicks readers, the GTR9 Pro is the sweet spot. Strix Halo's value proposition lives and dies on the 128 GB unified memory pool: on a machine like this you run Llama 3.1 70B Q4 at roughly 6–8 tok/s generation. That is slower than the ~18.5 tok/s an RTX 4090 delivers on the same model per LocalLLaMA community benchmarks, but the 4090 only holds 70B because of aggressive quantization and CPU offload. Step up to 70B at Q6, or try a 100B-class MoE model, and the 4090 falls off a cliff while the Beelink keeps serving. Power draw is the bigger story: the same workload that pulls 450 W continuous on a 4090 runs at roughly 110–130 W here. Over a year of always-on inference, that ~330 W gap adds up to roughly 2,900 kWh, or about $350 at $0.12/kWh and closer to $490 at $0.17/kWh. The 10 GbE pair also makes this a legitimate "AI in a closet" box that you can rack-mount with a shelf and forget about.
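The electricity figure is easy to re-run for your own tariff. The sketch below is a minimal calculation, assuming a constant always-on load and using the 450 W and ~120 W draws quoted above; the function names and the two example rates are ours, not measured data.

```python
HOURS_PER_YEAR = 24 * 365  # always-on duty cycle


def annual_energy_cost(watts: float, usd_per_kwh: float) -> float:
    """Yearly electricity cost in USD for a constant load."""
    kwh = watts * HOURS_PER_YEAR / 1000
    return kwh * usd_per_kwh


def annual_savings(high_watts: float, low_watts: float, usd_per_kwh: float) -> float:
    """Yearly cost difference between a high-draw and a low-draw machine."""
    return annual_energy_cost(high_watts, usd_per_kwh) - annual_energy_cost(low_watts, usd_per_kwh)


if __name__ == "__main__":
    # Example rates are assumptions; substitute the rate on your own bill.
    for rate in (0.12, 0.17):
        saved = annual_savings(450, 120, rate)
        print(f"${rate:.2f}/kWh: about ${saved:,.0f} saved per year")
```

If the box only runs inference part of the day, scale the result by the fraction of hours it is actually under load.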

Price sourced from Amazon.com. Last updated April 24, 2026. Price and availability subject to change.

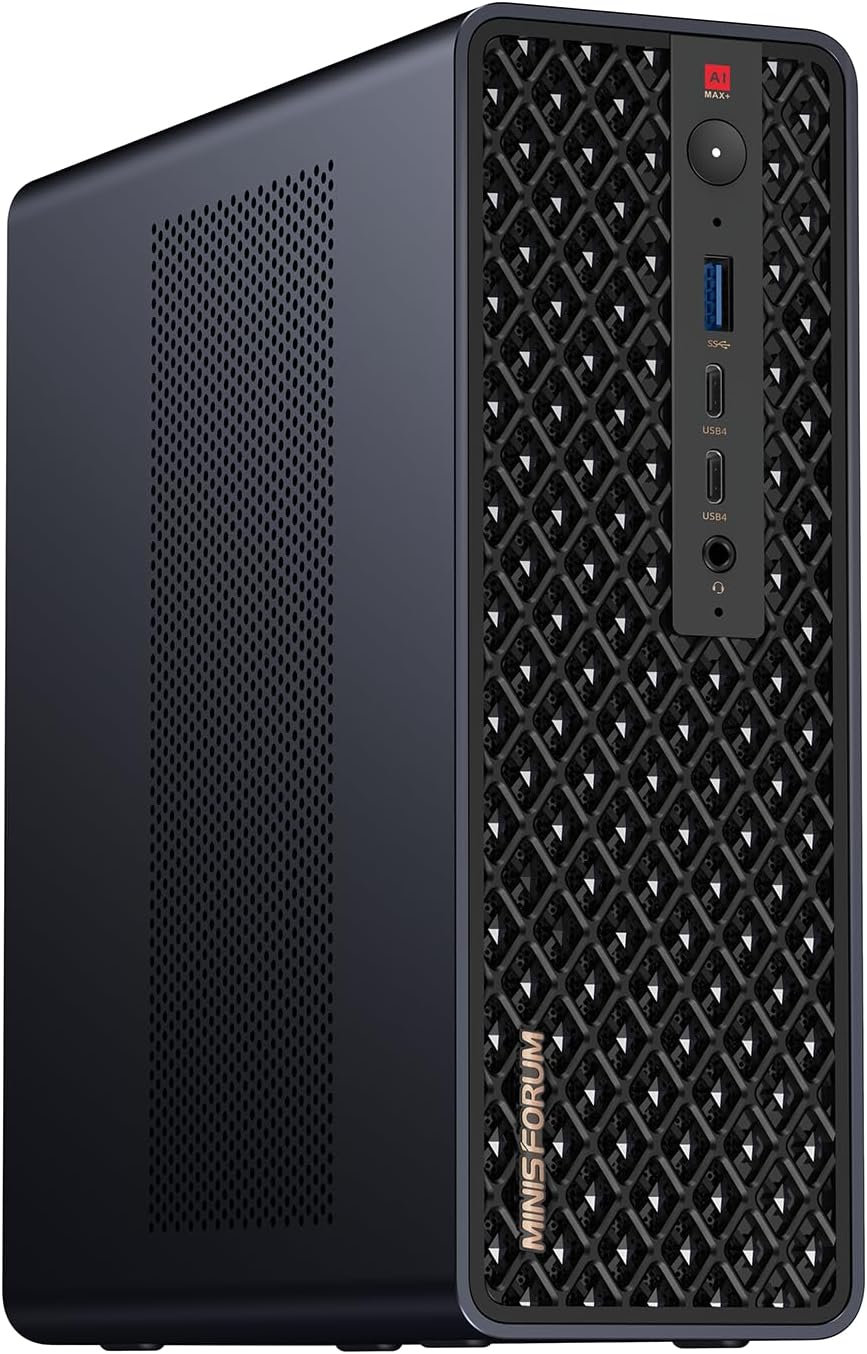

⚡ Best Performance: MINISFORUM MS-S1 MAX

• Ryzen AI Max+ 395 • 128 GB LPDDR5X UMA • PCIe x16 slot • USB4 v2 (80 Gbps) • Dual 10GbE • 320 W PSU • ~$3,039

Pros

- ✅ Only Strix Halo mini PC with a real PCIe x16 slot: you can bolt on a discrete GPU (RTX 5080/5090 class) and run hybrid CPU+iGPU+dGPU inference pipelines.

- ✅ USB4 v2 at 80 Gbps enables external GPU enclosures and fast external NVMe for model storage.

- ✅ 320 W PSU leaves power headroom for a PCIe card on top of the APU and 128 GB of RAM; most Strix Halo boxes ship with 200–230 W supplies.

- ✅ Cheaper than the Beelink by about $260 for the same core compute.

Cons

- ❌ Larger footprint than the GTR9 Pro — it's "mini" by workstation standards, not by Intel NUC standards.

- ❌ Fan curve is aggressive under sustained load; not a silent desktop companion.

If you see Strix Halo as a starting platform rather than a dead-end appliance, the MS-S1 MAX is the one. The PCIe slot is the killer feature: you can run Llama 3.1 70B Q4 entirely on the 8060S iGPU for background agents and add an RTX 5080 in the expansion slot for latency-sensitive interactive chat. Per LocalScore, the 5080 hits roughly 44.9 tok/s on Llama 3.1 8B Q4_K_M. No other Strix Halo box lets you mix and match like this, and the USB4 v2 port gives you a second path for eGPU docks if internal space runs out. For developers who want to prototype heterogeneous inference stacks (think llama.cpp's RPC mode), this is the most flexible $3,000 AI rig you can buy. Pair it with our AI rigs comparison hub to see how it stacks up against full-tower workstations.

🎯 Best for Professionals: HP Z2 Mini G1a Workstation

• Ryzen AI Max+ PRO 395 • 128 GB • 2 TB SSD • Windows 11 Pro • ISV certifications • Jet Black • $3,500–$4,500

Pros

- ✅ Ryzen AI Max+ PRO 395: AMD's "PRO" tier adds DASH remote management, memory guard encryption, and validated firmware. This is the chip HP builds its ISV certifications around.

- ✅ HP's workstation ISV matrix covers Adobe, Autodesk, Ansys, and major DCC vendors. If you're in a VFX, CAD, or regulated-industry workflow, this is the only Strix Halo mini PC with that paperwork.

- ✅ Three-year next-business-day on-site warranty standard — something no Chinese-brand mini PC matches.

- ✅ Z2-series thermal design is validated for 24/7 operation.

Cons

- ❌ $200–$1,200 premium over the Beelink (going by the price ranges listed here) for what is, at the silicon level, the same APU.

- ❌ HP's BIOS is locked down; enthusiast tuning headroom is limited.

- ❌ Markups on the 128 GB / 2 TB SKU are steep relative to the bare-metal performance.

For an IT buyer signing POs for a design team or a regulated lab, the Z2 Mini G1a is the only defensible choice on this list. Everything else is a consumer SKU. HP's Z by HP line has been a Strix Halo launch partner since CES 2025, and HP has published Puget Systems–style validation data for Stable Diffusion and Ollama workloads; per HP's own whitepaper, the G1a hits ~7 tok/s on Llama 3 70B Q4_K_M, in line with community numbers for the platform. You're paying for warranty, firmware maturity, and purchasing-process simplicity. If none of those matter to you, scroll up to the Beelink. If they do, nothing else on Amazon is close.

💰 Best Value: NIMO Mini PC (128 GB Strix Halo)

• Ryzen AI Max+ 395 (up to 5.1 GHz) • 128 GB LPDDR5X @ 8000 MT/s • 2 TB SSD • Radeon 8060S • USB4 • Wi-Fi 7 • ~$2,799

Pros

- ✅ Cheapest 128 GB Strix Halo mini PC on Amazon — roughly $500 less than the Beelink GTR9 Pro at the same memory tier.

- ✅ LPDDR5X clocked at 8000 MT/s (versus 5600–6400 on some competitors) — memory bandwidth is the single biggest determinant of LLM generation speed on Strix Halo.

- ✅ 2 TB SSD included; enough for a few dozen 70B-class Q4 GGUF files (~42 GB each) plus a diffusion model library.

- ✅ Triple 4K display output in a compact chassis makes this usable as a daily-driver desktop in addition to an AI rig.

Cons

- ❌ NIMO is the newest brand on this list; warranty and RMA experience are unproven versus Beelink and MINISFORUM.

- ❌ Single 2.5 GbE LAN (no dual 10 GbE); not ideal as a shared-network inference node.

- ❌ Limited firmware updates expected compared to HP/Corsair.

Sometimes the right answer is "the same silicon, cheaper." NIMO's 128 GB configuration undercuts the Beelink by enough that you can spend the difference on a good 1440p monitor or a second 2 TB NVMe and still come out ahead. The 8000 MT/s memory clock is the spec that matters: Strix Halo's LLM generation speed scales almost linearly with memory bandwidth, and every extra 400 MT/s is roughly a 5 % tok/s improvement on weight-bound models like Llama 3 70B. If you're running local inference alone (no fileserver duties, no ISV certification need), this is the value pick.

🧪 Budget Pick: Corsair AI Workstation 300

• AMD Ryzen AI Max 385 (not 395) • Radeon 8050S iGPU (up to 48 GB VRAM allocation) • 64 GB LPDDR5X @ 8000 MT/s • 1 TB NVMe • ~$1,699

Pros

- ✅ Half the price of flagship Strix Halo boxes while retaining the unified-memory architecture; 48 GB of addressable iGPU VRAM is still twice the 24 GB on a single RTX 4090.

- ✅ Corsair brand reliability and warranty infrastructure that no whitebox mini PC matches.

- ✅ 64 GB is enough to run a 32B-class model such as Qwen 3 32B at Q4 comfortably, plus smaller diffusion models at full resolution.

- ✅ Great on-ramp to Strix Halo for users unsure whether the platform fits their workflow.

Cons

- ❌ Ryzen AI Max 385 is the cut-down SKU — fewer cores and a smaller iGPU than the 395; TOPS closer to ~100 versus 126.

- ❌ 64 GB shared memory is not enough for 70B at Q4; you'll be capped at 30B–35B models for pure-GPU inference.

- ❌ 1 TB NVMe fills up fast with modern GGUFs (Llama 3.3 70B Q4 alone is ~42 GB).

Think of the AW300 as the "try before you commit" Strix Halo box. If you've been running local LLMs on an RTX 4070 Ti and want to understand whether unified memory changes your workflow before spending $3,000+ on a 128 GB box, this is the right first step. It runs the same Ryzen AI Max family silicon, just with fewer cores and less memory. Users who outgrow it can trade up to the Beelink or MS-S1 MAX; Corsair's resale value holds up reasonably well, so it's not a wasted purchase.

Strix Halo vs RTX 4090 vs Apple M4 Max: real AI benchmarks

Community benchmark data (LocalLLaMA, Phoronix, llama.cpp GitHub) puts the Ryzen AI Max+ 395 in a specific performance band: slower than a 4090 on compute-bound workloads, competitive with an M4 Max on unified-memory workloads, and uniquely capable at memory footprints above 48 GB. Here's how the numbers land on popular models:

| Model | Quant | RTX 5090 (32 GB) | RTX 4090 (24 GB) | Ryzen AI Max+ 395 (128 GB) | Apple M4 Max (128 GB) |
|---|---|---|---|---|---|
| Llama 2 7B | Q4_0 | ~264 tok/s | ~194 tok/s | ~35 tok/s | ~55 tok/s |
| Llama 3.1 8B | Q4_K_M | — | ~54 tok/s | ~28 tok/s | ~16.9 tok/s |
| Llama 3 70B | Q4_K_M | Will not fit | ~18.5 tok/s (offload) | ~6–8 tok/s | ~14 tok/s |
| Qwen 3 32B | Q4 | ~38 tok/s | ~22 tok/s | ~12 tok/s | ~22 tok/s |

Source columns: RTX 5090 (llama.cpp GitHub), RTX 4090 (Phoronix + arXiv), Ryzen AI Max+ 395 (LocalLLaMA community reports + HP validation), Apple M4 Max (LLM Check + LocalLLaMA). See our RTX 4090 benchmark page and Apple M4 Max benchmark page for the full roster.

What the table tells you:

- On small weight-bound models (7B–8B), a discrete GPU is 2–8× faster than Strix Halo. The iGPU's 256-bit LPDDR5X bus tops out around 256 GB/s versus the 4090's 1,008 GB/s of GDDR6X bandwidth.

- On large models that don't fit in 24 GB, the 4090 has to offload layers to system RAM over PCIe, which drags throughput well below what its memory bandwidth would otherwise allow. Strix Halo holds the whole model in its unified memory, with no offload penalty.

- Apple M4 Max is the closest philosophical competitor: also unified memory, also good up to 70B. Per LocalLLaMA, M4 Max holds roughly a 1.5–2× lead over Strix Halo on 70B models thanks to its 546 GB/s memory bandwidth versus Strix Halo's ~256 GB/s.

- Strix Halo's unique selling point is price: you get 128 GB unified memory at ~$2,800–$3,300; the 128 GB M4 Max Mac Studio starts around $3,499, and M3 Ultra Studio configurations with more memory exceed $5,000.
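The bandwidth figures above suggest a quick sanity check. During generation, every weight is read roughly once per token, so memory bandwidth divided by model size gives a first-order estimate of tok/s. The sketch below is a back-of-the-envelope model, not a benchmark: the function name is ours, and it ignores compute limits, KV-cache reads, and real-world efficiency losses.

```python
def decode_estimate_tok_s(bandwidth_gb_s: float, weights_gb: float) -> float:
    """First-order tokens/sec estimate for bandwidth-bound generation."""
    return bandwidth_gb_s / weights_gb


# Bandwidth figures are the ones quoted in this article.
UNIFIED_MEMORY_DEVICES = {
    "Ryzen AI Max+ 395": 256.0,  # GB/s
    "Apple M4 Max": 546.0,       # GB/s
}

LLAMA_70B_Q4_GB = 42.0  # approximate Q4_K_M weight size used in this article

if __name__ == "__main__":
    for name, bandwidth in UNIFIED_MEMORY_DEVICES.items():
        estimate = decode_estimate_tok_s(bandwidth, LLAMA_70B_Q4_GB)
        print(f"{name}: roughly {estimate:.1f} tok/s on 70B Q4")
```

With ~42 GB of Q4 weights, this lands near 6 tok/s for Strix Halo and 13 tok/s for the M4 Max, which is in the same ballpark as the community numbers in the table.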

What to look for in a Ryzen AI Max+ 395 mini PC

The Strix Halo platform is homogeneous at the silicon level: every 395 SKU has the same 16 cores, same 40-CU iGPU, same 126 TOPS. Differentiation is all at the board level. Focus on the following areas:

Memory capacity and speed

The single biggest predictor of LLM performance on Strix Halo is memory bandwidth, not memory size. 128 GB is the obvious "future-proof" number for 70B-class models, but a box that clocks its LPDDR5X at 8000 MT/s (like the NIMO) will beat a box clocked at 5600 MT/s on the same workload by 15–25 % in practice, and the on-paper bandwidth gap is larger still (8000 / 5600 ≈ 1.43×). Always check the memory speed spec, not just the capacity.
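To see why the memory clock matters, peak bandwidth can be estimated as bus width times transfer rate. This is a minimal sketch, assuming the 256-bit LPDDR5X bus quoted earlier in this article; the helper name is illustrative and the result is a theoretical peak, not a measured figure.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, mega_transfers_per_s: int) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_s / 1000


if __name__ == "__main__":
    BUS_WIDTH_BITS = 256  # Strix Halo bus width as quoted in this article
    for speed in (5600, 6400, 8000):
        bandwidth = peak_bandwidth_gb_s(BUS_WIDTH_BITS, speed)
        print(f"LPDDR5X-{speed}: {bandwidth:.1f} GB/s peak")
```

At 8000 MT/s this reproduces the ~256 GB/s figure; at 5600 MT/s the same bus peaks near 179 GB/s.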

Cooling and sustained power

AMD rates the 395 at up to 120 W TDP, but most mini PCs run the APU at 70–85 W sustained due to thermal constraints (total system draw at the wall is higher). Boxes with bigger chassis and 320 W+ PSUs (MINISFORUM MS-S1 MAX) hold boost clocks longer under 20-minute prompt-ingestion workloads. If your workflow involves long-context RAG, cooling matters more than on-paper TOPS.

Expansion: PCIe, USB4, OCuLink

Strix Halo's secret weakness is that it's a closed platform. Unless the box has a PCIe x16 slot or a USB4 v2 / OCuLink port, you cannot scale it. The MS-S1 MAX is the only one on this list with a real expansion slot; the Beelink and NIMO are appliances. Match the expansion story to your 18-month roadmap.

Networking

Dual 10 GbE on the Beelink GTR9 Pro is not a gimmick: it lets you treat the mini PC as an inference endpoint that multiple workstations hit over the wire. For single-user LLM workflows, 2.5 GbE is fine. For team or homelab use, dual 10 GbE matters.

Firmware and warranty

First-gen Strix Halo firmware is still settling. HP, Corsair, and Beelink are pushing regular BIOS updates through 2026; smaller brands have been slower. If you plan to run this on Linux (which most LLM users will, for ROCm compatibility), check the ROCm 6.2+ compatibility matrix before committing.

Linux & ROCm support

As of Q2 2026, recent ROCm releases support the RDNA 3.5 8060S iGPU on Ubuntu 24.04 LTS and recent Fedora; confirm the minimum ROCm version for this GPU in AMD's compatibility matrix before you install. Windows users can use DirectML or the Ollama Windows build (which auto-detects Strix Halo). Per Phoronix's April 2026 testing, Linux delivers 8–12 % higher sustained tok/s than Windows on the same hardware due to kernel scheduler differences.

FAQ

Is the Framework Desktop actually the best Strix Halo mini PC?

It's the reference design and it's excellent hardware, but Framework only sells direct — it's not on Amazon. For most buyers who want Prime shipping, warranty handling, and Amazon's return policy, the Beelink GTR9 Pro is the closest analog with the same Ryzen AI Max+ 395 SoC, same 128 GB unified memory, and arguably better networking (dual 10 GbE). The choice between the two is mostly about whether you want Framework's modular philosophy and service ecosystem or Amazon's convenience.

Can a Ryzen AI Max+ 395 mini PC actually run Llama 3.1 70B?

Yes, and this is the entire point of the platform. At Q4_K_M quantization, Llama 3.1 70B weights occupy about 42 GB. On a 128 GB Strix Halo box, the iGPU addresses the full model in its unified memory pool with room for a ~16K context KV cache. Expected generation speed is 6–8 tok/s, slower than a 4090 offloading layers, but with no quality loss from aggressive quantization. A single 24 GB GPU cannot run 70B Q4 without CPU offload, and even Q3 quants of a 70B model (roughly 30 GB and up) exceed 24 GB.
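For a sense of how much room a 16K context actually needs, the KV cache can be sized from the model's attention layout. The sketch below assumes the published Llama 3.1 70B configuration (80 layers, 8 key/value heads with grouped-query attention, head dimension 128) and an FP16 cache; those values are not from this article, and the function name is ours.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Size of the key/value cache in bytes for one sequence."""
    # Factor of 2 covers keys and values stored per layer.
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens


if __name__ == "__main__":
    # Assumed Llama 3.1 70B layout; check the model card for other models.
    size = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128,
                          context_tokens=16_384)
    print(f"16K-token KV cache: {size / 2**30:.1f} GiB")
```

Under these assumptions a 16K context costs about 5 GiB on top of the ~42 GB of weights, so the 128 GB pool has ample room for longer contexts or a second model.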

Strix Halo vs RTX 5090 for local AI — which should I buy?

Get the 5090 if you run models that fit in 32 GB (7B–30B class) and you want maximum tok/s. Get Strix Halo if you run models that don't fit in 32 GB (70B+) or you want the lowest idle and sustained power. The 5090 wins compute; Strix Halo wins memory capacity and efficiency. Many readers end up buying both — a 5090 workstation for interactive work, a Strix Halo mini PC for always-on background agents.
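A rough way to apply this rule of thumb is to estimate weight size from parameter count and bits per weight, then compare it with the memory the accelerator can address. The sketch below assumes about 4.8 bits per weight for Q4_K_M-style quants and a 4 GB allowance for KV cache and runtime buffers. Both numbers are our approximations, and the device list is illustrative.

```python
def weights_gb(params_billion: float, bits_per_weight: float = 4.8) -> float:
    """Approximate size of quantized weights in GB."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, memory_gb: float, overhead_gb: float = 4.0) -> bool:
    """True if weights plus a fixed allowance fit in the given memory."""
    return weights_gb(params_billion) + overhead_gb <= memory_gb


DEVICES_GB = {
    "RTX 4090": 24,
    "RTX 5090": 32,
    "Strix Halo (128 GB pool)": 128,  # upper bound: the OS keeps part of the pool
}

if __name__ == "__main__":
    for model, size_b in (("8B", 8), ("32B", 32), ("70B", 70)):
        for device, mem in DEVICES_GB.items():
            verdict = "fits" if fits(size_b, mem) else "does not fit"
            print(f"{model} Q4 on {device}: {verdict}")
```

Raising `bits_per_weight` to model Q6 or Q8 quants shows how quickly 32B-class models also outgrow a 24 GB or 32 GB card.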

Is the Ryzen AI Max+ 395 good for gaming?

Surprisingly, yes — the Radeon 8060S iGPU benches in the same neighborhood as a Radeon RX 7700 XT. It plays Cyberpunk 2077 at 1080p Medium / FSR Quality at 60+ fps. It is not a 4K gaming machine, but for a machine that also runs 70B LLMs it's remarkable that it plays modern AAA games at all. The GPD Win 5 handheld is built around this capability.

Does the Framework Desktop support NVIDIA CUDA?

No. Strix Halo uses AMD's RDNA 3.5 iGPU, which runs ROCm (on Linux) or DirectML / AMD's HIP SDK (on Windows). Many LLM toolchains now support both — llama.cpp, Ollama, vLLM, and ComfyUI all work — but if your workflow is locked to CUDA-only libraries (TensorRT-LLM, some specific xformers builds), Strix Halo will not run them. Check your toolchain compatibility before buying.
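If part of your stack is built on PyTorch, one quick compatibility check is to see which backend your installed build targets. This is a minimal sketch, assuming PyTorch is the framework in question (the article does not name it); `torch.version.hip` and `torch.version.cuda` are set on ROCm and CUDA builds respectively, and the function name is ours.

```python
def describe_torch_backend() -> str:
    """Report whether the installed PyTorch build targets ROCm, CUDA, or CPU."""
    try:
        import torch
    except ImportError:
        return "PyTorch not installed"
    # ROCm builds expose a HIP version string; CUDA builds leave it as None.
    if getattr(torch.version, "hip", None):
        return f"ROCm/HIP build ({torch.version.hip})"
    if getattr(torch.version, "cuda", None):
        return f"CUDA build ({torch.version.cuda})"
    return "CPU-only build"


if __name__ == "__main__":
    print(describe_torch_backend())
```

A CUDA build will not use the Radeon iGPU; on Strix Halo you would want a ROCm build of any PyTorch-based tool, or a Vulkan-capable runtime such as llama.cpp.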

How much power does a Strix Halo mini PC actually use?

Idle: 15–25 W. LLM inference (sustained): 90–130 W. Peak (gaming + AI simultaneously): 150–170 W. Compare to an RTX 4090 rig at 550 W under LLM load and 700–800 W under gaming + LLM. For always-on workloads, a Strix Halo box can recoup its higher purchase price versus a used 3090 build within ~18 months on power costs alone, depending on the price gap and your electricity rate.
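Whether the payback claim holds for you depends on three inputs: the price gap, the wattage saved, and your electricity rate. The sketch below works out a break-even time; the $900 price gap, the 440 W saving (550 W rig versus roughly 110 W), and the $0.16/kWh rate are hypothetical inputs chosen for illustration, not measured values.

```python
def breakeven_months(price_gap_usd: float, watts_saved: float,
                     usd_per_kwh: float, hours_per_day: float = 24.0) -> float:
    """Months of use needed for power savings to cover a price gap."""
    kwh_per_month = watts_saved / 1000 * hours_per_day * 365 / 12
    return price_gap_usd / (kwh_per_month * usd_per_kwh)


if __name__ == "__main__":
    # Hypothetical scenario; replace all three inputs with your own numbers.
    months = breakeven_months(price_gap_usd=900, watts_saved=440, usd_per_kwh=0.16)
    print(f"Break-even after about {months:.1f} months of always-on use")
```

Under those assumptions the break-even lands between 17 and 18 months; a larger price gap or a machine that sits idle most of the day stretches it considerably.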

Sources

- AMD Ryzen AI Max+ 395 product page — official AMD spec sheet for Strix Halo.

- Phoronix: AMD Ryzen AI Max+ 395 Linux Performance — ROCm 6.2 validation and Linux vs Windows tok/s delta.

- Tom's Hardware: HP Z2 Mini G1a Review — workstation validation data and sustained performance testing.

- LocalLLaMA: Strix Halo 128GB mini PC megathread — community tok/s reports across Beelink, MINISFORUM, NIMO.

- llama.cpp GitHub Discussions #11234 — Vulkan backend on RDNA 3.5 — runtime-level benchmarks on Strix Halo iGPU.
